We at We Are Voice realized the need to have a React Native app for our choral music service. In that process, Callstack became a natural development partner for us given their deep knowledge of the platform. Despite the geographical distance, our collaboration has been successful, with strong deliveries on time and good communication.

In brief

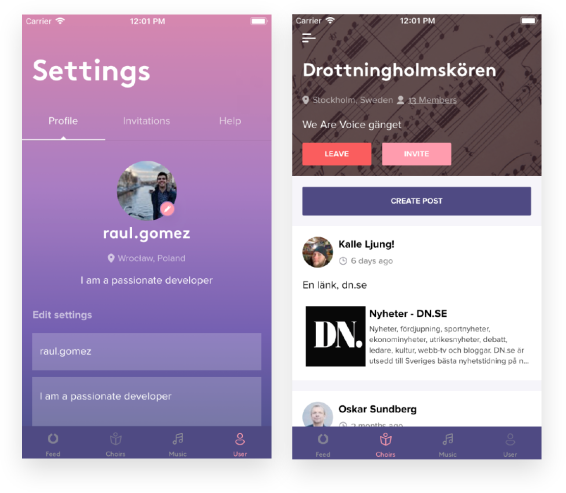

An app that allows choral singers to work on their arrangements with voice files and notes

Challenges

The main problem lay in the complexity of one of the main application requirements, the music service.

Given the music player specifications, we were challenged to prove that React Native has no limits when it comes to building mobile applications, no matter how complex they are. Performance and cross-platform support were a must, so we needed to deal with the Android and iOS native audio APIs, implement multi-channel audio streaming, support searching, and come up with a unified API exposed to JavaScript.

The other part of the system was the score SVG, which represented the notes and voices for a particular arrangement and had to work in sync with the music player, taking things like the tempo and note highlighting into account. Last but not least, the player had to work in offline mode and still be able to send out analytics data regardless of the network status.

Native module vs Native UI Component

This was one of the cases where bridging native code to JS was necessary, forcing us to write separate platform-specific code for the audio streaming: Kotlin on Android and Objective-C on iOS.

In React Native, there are two different approaches to expose functionality to JavaScript - a native module or a native UI component.

Going with the Native UI Component

Native modules are inherently imperative, whereas UI components are declarative. React presents a declarative model, giving us a chance to express UI as a function of state and props. But it doesn’t have to be UI at all.

In fact, we can take any imperative API and encapsulate it in a declarative component. That’s the beauty of React. We chose to build a native UI component, which allowed us to remove one of the most important constraints in the music player implementation: time.
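To make the idea of wrapping an imperative API in a declarative one more concrete, here is a minimal sketch in plain TypeScript. It is not the code used in the app: `ImperativePlayer`, `PlayerProps`, and `applyProps` are hypothetical names, and the imperative interface only stands in for whatever the native audio layer exposes. The point is that the caller describes the desired state, and a single function decides which imperative calls are needed.

```typescript
// Hypothetical imperative API, standing in for the native audio layer.
interface ImperativePlayer {
  load(url: string): void;
  play(): void;
  pause(): void;
  seek(positionMs: number): void;
}

// Declarative description of what the player should be doing right now.
interface PlayerProps {
  source: string;
  paused: boolean;
  positionMs?: number; // only set when the caller wants to jump somewhere
}

// Compare the previous and next props and issue only the imperative
// calls required to move the player from one state to the other.
function applyProps(
  player: ImperativePlayer,
  prev: PlayerProps | null,
  next: PlayerProps,
): void {
  const sourceChanged = prev === null || prev.source !== next.source;
  if (sourceChanged) {
    player.load(next.source);
  }
  if (
    next.positionMs !== undefined &&
    (sourceChanged || prev?.positionMs !== next.positionMs)
  ) {
    player.seek(next.positionMs);
  }
  if (sourceChanged || prev?.paused !== next.paused) {
    if (next.paused) {
      player.pause();
    } else {
      player.play();
    }
  }
}
```

Calling `applyProps` twice with the same props issues no calls at all, which is what makes the component predictable: the sequence of `play`, `pause`, and `seek` calls is derived from state instead of being scattered across event handlers.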

Highly performant music player on both Android and iOS

With further development in mind, we’ve left the door open to decoupling the player from the app and making it a standalone package that lets us test songs in isolation.

We have proved that React Native is ready for high stakes: it was put to the test and delivered a highly performant music player that works seamlessly on both Android and iOS. The high predictability of the system is another win, thanks to the declarative approach we have taken.

As a result, we have developed an application that has around 20k active users and growing, with a rating of 4.7/5 on the Google Play Store.

Power of React Native Animated API and Interpolation

We had two different pieces that worked separately: the music player and the SVG score. How did we set the whole system in motion? With the power of the React Native Animated API and interpolation. A native ScrollView component exposes a callback in JS that fires every time we get new scroll positions, but the scrolling itself occurs on the native side.

If you think about it, a music player can be analyzed with the same mental model, just by replacing scroll positions with timestamps. It means that by leveraging the Animated API, and interpolation in particular, we can define all the system motion upfront, just once, declaratively. By hooking up native-to-JS events with Animated.event, we can define all the relationships as different interpolations over this.state.progress (score, vertical tempo bar, progress bar...). Moreover, if we animate the right properties, such as transforms, we can offload all the animations to the native driver, relieving the JS thread.
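The mapping behind those interpolations is piecewise linear, and it can be sketched in plain TypeScript without any React Native dependency. This is an illustration only: `interpolate`, `scoreOffset`, `progressBar`, `frameAt`, and every number below are hypothetical, chosen to show how one progress value in milliseconds can drive several outputs. In the app itself the equivalent would be calling `interpolate` on an `Animated.Value` with an `inputRange`, an `outputRange`, and `extrapolate: 'clamp'`.

```typescript
interface Interpolation {
  inputRange: number[];  // ascending timestamps in milliseconds
  outputRange: number[]; // output value at each timestamp
}

// Piecewise linear interpolation, clamped at both ends of the input range.
function interpolate(
  value: number,
  { inputRange, outputRange }: Interpolation,
): number {
  if (inputRange.length < 2 || inputRange.length !== outputRange.length) {
    throw new Error("ranges must have the same length (at least 2)");
  }
  const last = inputRange.length - 1;
  if (value <= inputRange[0]) return outputRange[0];
  if (value >= inputRange[last]) return outputRange[last];
  // Find the segment that contains the value.
  let i = 1;
  while (value > inputRange[i]) i++;
  const t = (value - inputRange[i - 1]) / (inputRange[i] - inputRange[i - 1]);
  return outputRange[i - 1] + t * (outputRange[i] - outputRange[i - 1]);
}

// Assumed example: a 3-minute song whose score scrolls faster during the
// first minute (for instance because of a quicker tempo) than afterwards.
const scoreOffset: Interpolation = {
  inputRange: [0, 60000, 180000],
  outputRange: [0, -2000, -4000], // horizontal translation in pixels
};

const progressBar: Interpolation = {
  inputRange: [0, 180000],
  outputRange: [0, 1], // horizontal scale of the progress bar
};

// Everything on screen is derived from the single progress value.
function frameAt(progressMs: number) {
  return {
    scoreTranslateX: interpolate(progressMs, scoreOffset),
    progressScaleX: interpolate(progressMs, progressBar),
  };
}
```

Both outputs here are transform values (a translation and a scale), which is the kind of property the native driver can animate without going through the JS thread.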

To solve the SVG scaling issue, we decided to integrate a proxy serverless lambda that wraps the SVG in an HTML document, so we can control the meta tags added and avoid the rendering inconsistencies between iOS and Android that could otherwise have arisen.

If we had chosen a native module instead of a native UI component, we couldn’t have tackled the problem this way, and the state machine of the music player would have been far more complex.
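The core of such a proxy is a small string transformation, sketched below in TypeScript. The function names, the specific viewport meta tag, and the inline styles are assumptions made for illustration; the original only states that the SVG is wrapped in HTML so the meta tags can be controlled. Fetching the SVG and wiring this into a particular serverless platform are left out.

```typescript
// Wrap raw SVG markup in a minimal HTML document with a fixed viewport,
// so both platforms are given the same scaling instructions.
// The exact meta tag and styles are assumptions, not the app's real values.
function wrapSvgInHtml(svg: string): string {
  return [
    "<!DOCTYPE html>",
    "<html>",
    "<head>",
    '<meta charset="utf-8">',
    '<meta name="viewport" content="width=device-width, initial-scale=1">',
    "<style>html, body { margin: 0; } svg { display: block; width: 100%; height: auto; }</style>",
    "</head>",
    `<body>${svg}</body>`,
    "</html>",
  ].join("\n");
}

// Shape of a typical HTTP-style response returned by a serverless function.
interface HttpResponse {
  statusCode: number;
  headers: Record<string, string>;
  body: string;
}

// Build the response the proxy would return for a given SVG document.
function toHtmlResponse(svg: string): HttpResponse {
  return {
    statusCode: 200,
    headers: { "Content-Type": "text/html; charset=utf-8" },
    body: wrapSvgInHtml(svg),
  };
}
```

Because the wrapper is a pure function, it can be checked with a fixed SVG string before it is deployed behind the proxy.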

We Are Voice is an initiative that makes singing together easier and more fun. With an application that lets singers work on their arrangements with voice files and notes, the company aims to make everyday life easier for choir singers and choir leaders by combining a communication system with a streaming music service.
